This week, my Studio 2 class have been working on their ‘Art Game’ brief. This is one of my favourite briefs of the trimester. My students must visit the Queensland Gallery of Modern Art, choose an artwork that speaks to them, and adapt this artwork into a videogame work over the course of a single week. I assess the term ‘adapt’ pretty loosely. They might consider how the meaning of the artwork is altered by the medium of videogames. They might find something interesting in the artist’s intentions or story that they want to draw from. They might find something in their own response to the artwork they want to explore. They might try to simply recreate the experience but in an interactive or navigable manner. All I really want from the project is for them to consider how art expresses ideas broadly and the relationship between videogames and other creative media. And for them to make some weird, experimental stuff. In the past, the brief has produced some really great works. The combination of the short turnaround and the ‘arty’ tone of the brief allows the students to just take risks and make something really out there. Here’s a collection of what they made.

As I did with Edge in a previous trimester, I also wanted to do this project myself. When we went to GOMA, there was a small exhibition on perspective that included a range of interesting video works. One in particular really captured my attention: The Fall From Raiatea by Denis Beaubois, as part of the Terminal Vision project. For this work, five cathode-ray tube televisions sat side-by-side with fuzzy, distorted VHS footage on them. Each television shows footage from a different camera capturing the same event from different angles: the cameras themselves being hurled out the window of the 27th floor of an apartment building. At the start of the work, each camera is turned on, each TV flickering to life. Then Beaubois just sort of holds the rig of cameras out the window for a while, giving a real blurred look at the surrounding suburbs. This footage is already low quality, I assume, because when the cameras impacted the ground, the existing footage was affected as well. I like this idea of the future event impacting the current footage. Eventually, Beaubois flings the cameras from the window and we get this kaleidoscopic, vertigo-inducing sense as the cameras plummet to Earth, each facing a different direction.

But it is once they land that the video is particularly interesting. The footage becomes more messed up as the cameras are clearly broken at this point. The images flicker, distort colour, and get smothered with grain and noise. Each camera frames the surrounding buildings and gardens in a way that could not have been predetermined by the artist. There is a randomness in where each camera landed, what direction it settles on, and in what ways it breaks. As viewers, we are stuck with this view for a prolonged period of time until the artist makes their way down from the 27th floor and finds each camera. While watching the distorted, angled cameras, this is what I most thought about: the artist, somewhere off-screen, making their way down the stairs and then searching around, trying to figure out just where each camera landed.

Beaubois was exploring a range of ideas with this work which don’t feel necessarily connected to each other. Primarily, the work seems to speak to the medium of video itself. The way that when we have a crisp, clean picture we look through the picture instead of at it. Glitches, grain, and distortion disrupt this by drawing attention to the medium itself, calling into question its claims to authenticity as a perfect ‘medium’ onto the world. This questioning of the authenticity of video is doubled by the multiple cameras looking in different directions, highlighting how there is no single, objective view on any one event but instead viewing is itself always situated, always framing events in a particular way. With my whole background in phenomenology and embodiment, there is also a striking parallel with notions from the likes of Haraway and de Certeau of the difference between seemingly objective ‘views from above’ and subjective ‘views from below’. de Certeau in particular contrasts the different experiences of New York from atop the Empire State Building and down on its streets. These two views (from above, from below) are transitioned between quite violently in The Fall From Raiatea.

Beaubois is also apparently alluding to the seeing technologies of war, and the disposability of cameras attached to cruise missiles. But it was the themes around the materiality and authenticity of video that I was most intrigued by and wanted to explore in my own adaptation of this work. I also wanted to create something that spoke to that sense of temporality that really resonated with me, that having to wait for the artist to come and pick up the cameras—your own view on this world—that has been carelessly hurled away.

The way I could do this came to me even as I watched the videos in GOMA. Have the player looking at the televisions, just as I was, but also have the player throw the cameras themselves and then take the journey down from the building to locate and pick up each camera. Perhaps I could add a sixth television that offered a first-person view. This way, the player could have that dual-embodiment of both artwork viewer and artist at the same time. Taking on two positions, two roles, at the same time. That seemed very much in line with what the artwork was going for.

The most important thing to get working would be the cameras themselves breaking. I had seen different image effect scripts that could make a camera look like it was glitching out, such as in Adrian Forest’s Road Trip. If I could make a camera glitch out the way I wanted it to, everything else would be relatively straightforward. So I grabbed a bunch of different scripts from the Asset Store and GitHub, mucked around with them, and figured out what would work together and what wouldn’t. Through a combination of Keijiro Takahashi’s Glitch script, KillaMaaki’s Retro TV Effects, and Staffan Tan’s VHS Glitch script I got a general effect I was pretty happy with.

But then I needed to make it so the cameras became more messed up each time they were thrown, but not in an orderly or linear fashion. If each television flickered in the exact same way, then that would look incredibly weird and artificial. The approach I took was quite complicated to put together, but quite straightforward in its logic. For each script, I found the variables I wanted to change. I set a script so that each time a camera hit the ground, a ‘maximum’ value for each of the variables was incremented by a random increment amount. Then, a timer would set the variable to a random value between 0 and the current maximum value of that variable every X seconds. So, for example, perhaps the bloom intensity could be a maximum of 1 but then, after a camera hits the ground, the maximum is, say, 2.6. Now, every 15 seconds or so, the bloom is set to a random value between 0 and 2.6, instead of between 0 and 1. Some of these values were lerped smoothly, others jumped abruptly. So rather than the images simply getting more distorted in a linear manner, they flicker between different intensities so as to give flickering glimpses of the world beyond. Throw them enough times, though, and they are likely to get so distorted as to be near incoherent.
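The escalating-ceiling logic described above can be sketched roughly as follows. This is a standalone Python illustration rather than the Unity scripts from the project: the class names, the `smooth` flag, the `blend_speed` parameter, and the 15-second default interval are all hypothetical stand-ins for whatever the real scripts used.

```python
import random
from dataclasses import dataclass, field
from typing import List


@dataclass
class GlitchParameter:
    """One tweakable value on an image effect, e.g. bloom intensity."""
    name: str
    maximum: float          # current ceiling; only ever grows
    max_increment: float    # largest amount a single impact can add
    smooth: bool = False    # True: ease towards the new value; False: jump
    target: float = 0.0     # most recently rolled value
    value: float = 0.0      # value currently applied to the effect

    def on_impact(self, rng: random.Random) -> None:
        # Each landing raises the ceiling by a random amount.
        self.maximum += rng.uniform(0.0, self.max_increment)

    def reroll(self, rng: random.Random) -> None:
        # Roll a new target anywhere between 0 and the current ceiling.
        self.target = rng.uniform(0.0, self.maximum)
        if not self.smooth:
            self.value = self.target

    def step(self, dt: float, blend_speed: float = 1.0) -> None:
        # Smooth parameters move a fraction of the way to the target each frame.
        if self.smooth:
            t = min(1.0, blend_speed * dt)
            self.value += (self.target - self.value) * t


@dataclass
class GlitchyCamera:
    parameters: List[GlitchParameter]
    reroll_interval: float = 15.0   # the "every X seconds" timer
    rng: random.Random = field(default_factory=random.Random)
    timer: float = 0.0

    def hit_ground(self) -> None:
        for p in self.parameters:
            p.on_impact(self.rng)

    def update(self, dt: float) -> None:
        self.timer += dt
        while self.timer >= self.reroll_interval:
            self.timer -= self.reroll_interval
            for p in self.parameters:
                p.reroll(self.rng)
        for p in self.parameters:
            p.step(dt)
```

Because every camera rolls its own random increments and values, no two screens degrade in step with each other, while the growing ceiling still pushes all of them towards incoherence over repeated throws.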

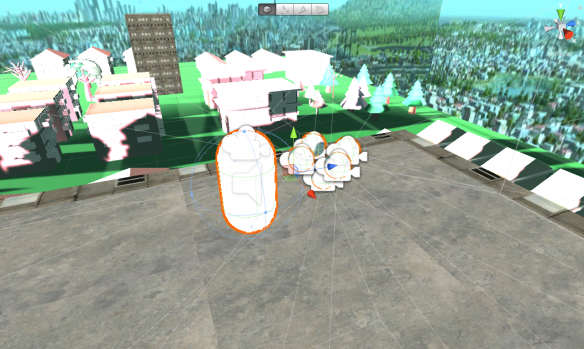

The cameras themselves are simply childed to the player’s first-person controller, each rotated to look in a different direction. When the player clicks the mouse button, each camera is un-childed and goes shooting ‘forward’ relative to its current angle (except the rear camera, which goes ‘backwards’ instead of just hitting the player). When the player picks them up again, they get re-childed and set back to their original rotation, ready to be thrown again.

The player and camera rig setup
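For anyone curious about the throw-and-retrieve behaviour, here is a minimal sketch of the idea in plain Python rather than Unity. It only models rotation around the vertical axis, and the camera names, angles, `throw_all`, `pick_up`, and the `attached` flag (standing in for Unity parenting) are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def forward_from_yaw(yaw_deg: float) -> Vec3:
    # Assumed convention: yaw 0 faces +Z, positive yaw turns towards +X.
    r = math.radians(yaw_deg)
    return (math.sin(r), 0.0, math.cos(r))


@dataclass
class RigCamera:
    name: str
    rest_yaw_deg: float             # original rotation relative to the player
    throw_backwards: bool = False   # True for the rear camera only
    attached: bool = True           # stands in for being childed to the player
    yaw_deg: float = 0.0            # current yaw; world-space while detached
    velocity: Vec3 = (0.0, 0.0, 0.0)


def make_rig() -> List[RigCamera]:
    # Hypothetical layout: the real angles are not given in the post.
    return [
        RigCamera("front", 0.0),
        RigCamera("front-right", 45.0),
        RigCamera("left", -90.0),
        RigCamera("right", 90.0),
        RigCamera("rear", 180.0, throw_backwards=True),
    ]


def throw_all(rig: List[RigCamera], player_yaw_deg: float, speed: float) -> None:
    for cam in rig:
        if not cam.attached:
            continue
        cam.yaw_deg = player_yaw_deg + cam.rest_yaw_deg
        x, y, z = forward_from_yaw(cam.yaw_deg)
        # The rear camera faces the player, so it is launched along its own
        # "backwards" direction to fly away from the player instead.
        sign = -1.0 if cam.throw_backwards else 1.0
        cam.velocity = (sign * x * speed, sign * y * speed, sign * z * speed)
        cam.attached = False        # un-child from the player


def pick_up(cam: RigCamera) -> None:
    cam.attached = True             # re-child to the player
    cam.velocity = (0.0, 0.0, 0.0)
    cam.yaw_deg = cam.rest_yaw_deg  # back to its original rotation
```

In this sketch the rear camera ends up travelling the same way as the front one, which matches the intent of sending it away from the player rather than into them.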

The screens themselves are simply cubes with Render Textures on them, so each camera’s footage is rendered on its own cube, and then a ‘main’ camera looks at these cubes. I wanted the cubes to emit a glow like a television set, but I couldn’t get this to work without rendering the screen itself practically white.

Early prototype of the render textures.

It was important for there to be a long delay between throws and for the player to have to actually walk up and down the tower stairs. So I decided to make it so the cameras could only be thrown from the top of the tower. Further, I disabled the jump button and implemented an invisible wall so the player couldn’t simply jump off and grab the cameras right away. This makes the game more tedious, but also better captures the experience I was going for. I want the player forced to just look at the frames of the thrown cameras for a while before they throw again. Still, I’m concerned this means players will only throw the cameras once or twice before just closing the game. More work could have been done to balance this.

All that was left at this point was to create all the assets. Creating 3D assets isn’t something I have much experience in, beyond the simple buildings in Town, and the one week I had to make this game was not long enough to learn. I decided it was finally time to give ProBuilder a crack. I created a very simple rectangular tower with stairs spiralling down it. This worked for the tower I wanted the player to throw the cameras off, but what the player looked at from this tower and from the chucked cameras needed to be a bit more detailed than a rectangular prism.

I found a really interesting tool on the Asset Store to create procedurally generated cities from procedurally generated buildings and trees. I didn’t get it working perfectly, but I did get it to make some houses and trees, and then I just placed these on a plane and duplicated the plane a few times. It’s not particularly pretty, but it is sufficient for what the player is actually capable of seeing through the small screens. I then just found some images of a cityscape (I think it is actually a screenshot of Cities XL) for my skybox. Again, it isn’t pretty, but it is sufficient considering how poorly detailed the screens the player views it through are.

The game scene. The tall towers were created in ProBuilder.

Audio is something I would have liked to have been more elegant. In the actual artwork, the audio noise was dynamic and really strongly amplified the materiality of the work, throbbing in and out with the distortion. I didn’t have time to achieve anything this clever, and instead simply placed a looping static noise on each camera, with the volume relative to some of the visual effects. I also found some dull thud noises for when the cameras land.
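Tying the static volume to the visuals can be as simple as averaging how far a few effects are pushed towards a reference ceiling. The sketch below is only a guess at that kind of mapping, written in Python for illustration: the function name, the `base` floor, and the choice of a plain average are assumptions, not what the game actually does.

```python
from typing import Sequence


def static_volume(intensities: Sequence[float],
                  ceilings: Sequence[float],
                  base: float = 0.1) -> float:
    """Map current effect intensities to a loop volume between base and 1."""
    # Normalise each effect against its own reference ceiling, capped at 1.
    ratios = [min(1.0, max(0.0, value / ceiling))
              for value, ceiling in zip(intensities, ceilings)
              if ceiling > 0.0]
    if not ratios:
        return base
    level = sum(ratios) / len(ratios)
    # Keep a faint hiss even when the picture is clean.
    return base + (1.0 - base) * level
```

Something like `static_volume([bloom, jitter], [5.0, 1.0])` would then be fed to each camera's looping static source every frame.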

I am real happy with how this game came together, but working on it, especially in the final few days, was actually incredibly frustrating and demotivating. My development process was less than ideal. I started with a test scene to get some stuff working, and then continued to just chuck stuff in until, suddenly, my test scene was my actual game. What this meant was that my folder organisation was atrocious and, further, I didn’t use prefabs. Prefabs are objects of which you can create instances in your game. So, for instance, bullets or enemies are things you would want to use prefabs for. Then, if you want to change your enemies’ health, you can just change the prefab instead of every single enemy individually. I didn’t use prefabs in this game, when I should have for the cameras and the screens. This made the smallest tweaks late in the project incredibly tedious to make, and simply navigating the project in Unity was a frustrating experience. It was a mess, and I was keen to just get away from it. This highlights the importance of taking the time to keep a project neat, even when it is a small, rough project.

This was also the first game I’ve created since buying a relatively powerful PC. I did a lot of my development on this PC, when usually I would use my MacBook Air. Because of this, it was only once I was done that I realised how poorly the game ran on less powerful devices. If I had bothered to test it on my MacBook sooner, I would have considered finding ways to switch the different image effects on and off to help optimise it. Instead, I didn’t realise there was a problem until it was too late.

Overall, I’m incredibly happy with the final version of this game in terms of how it adapts and speaks to the original artwork. I think I’ve captured something really interesting in the relationship between the-player-as-actor and the-player-as-viewer. I’m less happy with the game on a technical and process level, but at least I now know how not to screw up in these ways for the future.

You can find the game on my itch.io page, here.
